score yourself as this is extremely trivial function print np.mean(clf. Oob_score=False, random_state=None, verbose=0, We demonstrate how interpretable random forest models can produce estimates of a set of (potentially correlated) malnutrition and poverty prevalence measures. Min_weight_fraction_leaf=0.0, n_estimators=10, n_jobs=1, Max_depth=None, max_features='auto', max_leaf_nodes=None, RandomForestClassifier(bootstrap=True, class_weight=None, criterion='gini', In particular you can use LabelEncoder > from sklearn.ensemble import RandomForestClassifier as RF PredValid <- predict(model4, ValidSet, type = "class")ĬonfusionMatrix(predValid, ValidSet$classes)įinal_prediction <-predict(model4, data2)ĬonfusionMatrix(final_prediction, as.Simply encode labels as integers and everything will work well. Train <- sample(nrow(data1), 0.7*nrow(data1), replace = FALSE) # training set : validation set = 70:30(random) Random forest models are widely used in many application domains due to their performance and the fact that their constituent decision trees carry clear. Here is the code: data1<-read.csv("train_data raw.csv",header = TRUE,ĭata2<-read.csv("predict_data raw.csv",header = TRUE, The datasets in this code can be downloaded from the link: Please see my code and datasets attached, anyone can help me to fix my code? Thanks a lot in advance. Random forest are a supervised Machine learning algorithm that is widely used in regression and classification problems by data scientists. Random forest are a supervised Machine learning algorithm that is widely used in regression and classification problems by data scientists. The data cannot have more levels than the reference". If I do not add this sentence, then it returens "Error in fault(final_prediction, as.factor(data2$classes)) :

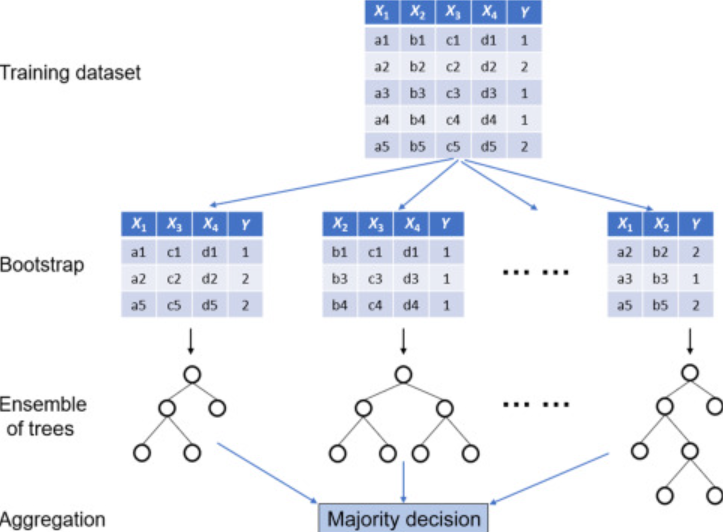

To match the levels, however, the problem still remains. I checked other solutions and added levels(data2$classes) <-levels(TrainSet$classes) I used "table()" to show the results, also returning "NA". When using random forests to predict a quantitative response, an important but often overlooked challenge is the determination of prediction intervals that will contain an unobserved response value with a specified probability. However, when I want to use "model4" to predict unknown samples in data2, the error came, where all the values in "prediction()" were "NA". Random forests are among the most popular machine learning techniques for prediction problems. The authors 8 investigated about supervised machine learning algorithms Naive Bayes, Random Forest and Decision Tree algorithm for prediction of anemia. Random forests RF 5 are a popular classification and regression ensemble method.The algorithm works by building multiple individual classifiers (or regression functions) and then aggregating them to make a final prediction. The "predict()" function works very well for data1 to predict validated dataset and returns an "average accuracy" of 97.4%. Random forest optimization Random forests. A random forest regression model can also be used for time series modelling and forecasting for achieving better results. Random forests showed some clinically important predictors that were not shown in the approach by regression analysis. A random forest is a meta estimator that fits a number of decision tree classifiers on various sub-samples of the dataset and uses averaging to improve the predictive accuracy and control over-fitting. Random forest is also one of the popularly used machine learning models which have a very good performance in the classification and regression tasks. 
The random forest model was built and I used Random Forest "model4" successfully in the training dataset, where "set.seed(1000)" and 70% samples were set as training and 30% samples set as validation. The prediction model based on the Random Forest method was able to predict the change in HbA1c with higher accuracy than that obtained with the regression analysis. The purpose of this code was to train the 1200 samples in data1 using the Random Forest model and to predict the unknown 203 samples in data2 to classify into four groups (ultramafic, mafic, intermediate and sediment). Subgroup analyses stratified by ML methods, sample size, age groups, and outcome definitions were conducted. I have another data set, named "data2", which has 203 samples with also 28 variables (elements) for prediction.

I have training data set, "data1" which has 1200 samples with 28 variables (elements) with labeled classes and the "classes" column has four classifications (ultramafic, mafic, intermediate, and sediment) and was set as factor. I am using Random Forest for prediction purposes.
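The 70:30 split described in the question (`set.seed(1000)` followed by `sample()` without replacement) can be sketched in Python with a seeded `random.Random` instance. This is only an analogue: Python's random number generator is not R's, so seed 1000 will not pick the same rows as the R code, and `train_valid_split` is a hypothetical helper name.

```python
import random


def train_valid_split(n_rows, train_fraction=0.7, seed=1000):
    """Return (train_idx, valid_idx), a reproducible random split of row indices."""
    rng = random.Random(seed)  # local, seeded generator, playing the role of set.seed(1000)
    n_train = int(train_fraction * n_rows)  # 0.7 * 1200 -> 840 training rows
    train_idx = rng.sample(range(n_rows), n_train)  # drawn without replacement
    chosen = set(train_idx)
    valid_idx = [i for i in range(n_rows) if i not in chosen]  # the remaining 30%
    return train_idx, valid_idx


train_idx, valid_idx = train_valid_split(1200)
print(len(train_idx), len(valid_idx))  # 840 360
```

Keeping the generator local, rather than seeding the global one, means other code that draws random numbers cannot shift which rows end up in the validation set.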
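When `predict()` works on the validation split but returns only `NA` for new data, the new data itself is worth inspecting. With the `randomForest` package, for example, a model fitted through the formula interface silently returns `NA` for any row of new data that has a missing predictor value, so a predictor column that is empty in the prediction file would make every prediction `NA`. A column that was read as text because of a stray non-numeric entry, or a column name that differs from the training data, is also worth ruling out. A minimal pre-flight check in plain Python, assuming the file is a CSV with a header row; the function name, element columns and sample rows are hypothetical:

```python
import csv
import io


def check_new_data(train_columns, new_csv_text):
    """List missing columns, empty cells and non-numeric values in the new data."""
    reader = csv.DictReader(io.StringIO(new_csv_text))
    problems = []
    missing_cols = [c for c in train_columns if c not in (reader.fieldnames or [])]
    if missing_cols:
        problems.append(f"missing columns: {missing_cols}")
    for row_no, row in enumerate(reader, start=2):  # line 1 of the file is the header
        for col in train_columns:
            value = (row.get(col) or "").strip()
            if value in ("", "NA"):  # read.csv treats "NA" as missing by default
                problems.append(f"row {row_no}: {col} is missing")
                continue
            try:
                float(value)
            except ValueError:
                problems.append(f"row {row_no}: {col} is not numeric ({value!r})")
    return problems


train_columns = ["SiO2", "MgO"]  # hypothetical element columns
sample = "SiO2,MgO,classes\n45.1,30.2,\n52.3,,\n61.0,<0.01,\n"
for problem in check_new_data(train_columns, sample):
    print(problem)
```

The same checks in R would be along the lines of `colSums(is.na(data2))` and `str(data2)` compared with `str(data1)`.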
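The line `levels(data2$classes) <- levels(TrainSet$classes)` may not do what it appears to. In R, assigning to `levels()` renames the existing levels position by position; it does not re-map each value to the training level with the same label. Re-creating the factor with `factor(data2$classes, levels = levels(TrainSet$classes))` matches by label instead, and any label not among the training levels becomes `NA`. The Python sketch below mimics both behaviours with plain lists so the difference is visible; the function names and the placeholder label `"unknown"` are hypothetical.

```python
def relabel_positionally(values, old_levels, new_levels):
    """Mimic R's `levels(x) <- new_levels`: old level i is renamed to new_levels[i]."""
    rename = dict(zip(old_levels, new_levels))  # pairs levels by position, not by label
    return [rename[v] for v in values]


def refactor(values, reference_levels):
    """Mimic R's `factor(x, levels = reference_levels)`: match by label; unknown -> None (NA)."""
    allowed = set(reference_levels)
    return [v if v in allowed else None for v in values]


train_levels = ["intermediate", "mafic", "sediment", "ultramafic"]
# Hypothetical prediction-set labels: "unknown" stands in for a placeholder class value.
data2_values = ["mafic", "sediment", "ultramafic", "unknown"]
data2_levels = sorted(set(data2_values))  # R's default factor levels are sorted too

print(relabel_positionally(data2_values, data2_levels, train_levels))
# ['intermediate', 'mafic', 'sediment', 'ultramafic']  (every label shifted)
print(refactor(data2_values, train_levels))
# ['mafic', 'sediment', 'ultramafic', None]
```

If the `classes` column of the prediction set is empty or a placeholder because those samples are genuinely unknown, there is no reference to build a confusion matrix against; the predictions themselves (for example `table(final_prediction)`) are then the result.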
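caret's `confusionMatrix()` cross-tabulates predictions against a reference factor and stops with "The data cannot have more levels than the reference" when the predictions carry more levels than the reference. Its overall accuracy is the fraction of matching prediction/reference pairs, i.e. the mean of an element-wise `prediction == truth` comparison. A dependency-free sketch with a hypothetical `confusion_and_accuracy` helper that loosely mirrors the level check; it also shows that with all-`NA` predictions nothing is left to compare once incomplete pairs are dropped:

```python
from collections import Counter


def confusion_and_accuracy(predicted, reference, levels):
    """Cross-tabulate predicted vs reference labels and return (counts, accuracy)."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    extra = {p for p in predicted if p is not None} - set(levels)
    if extra:
        # Loosely mirrors caret refusing predictions with levels the reference lacks.
        raise ValueError(f"predictions contain levels not in the reference: {sorted(extra)}")
    # Drop pairs with a missing value (None plays the role of NA), as R's table() does by default.
    pairs = [(p, r) for p, r in zip(predicted, reference) if p is not None and r is not None]
    if not pairs:
        raise ValueError("no complete prediction/reference pairs to compare")
    counts = Counter(pairs)  # counts[(pred, ref)] = number of samples with that pair
    accuracy = sum(p == r for p, r in pairs) / len(pairs)  # mean of the element-wise matches
    return counts, accuracy


levels = ["intermediate", "mafic", "sediment", "ultramafic"]
predicted = ["mafic", "mafic", "sediment", "ultramafic", None]
reference = ["mafic", "intermediate", "sediment", "ultramafic", "mafic"]
counts, accuracy = confusion_and_accuracy(predicted, reference, levels)
print(accuracy)  # 0.75
```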
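The advice to encode labels as integers (for example with scikit-learn's `LabelEncoder`) amounts to learning one fixed label-to-integer mapping from the training labels and reusing it for any new data, so the same rock type always gets the same code. `LabelEncoder` stores its classes in sorted order and raises an error for labels it has not seen. The class below is a hypothetical, dependency-free stand-in that follows the same idea; it is not scikit-learn's implementation.

```python
class SimpleLabelEncoder:
    """Map class labels to integers 0..k-1 using the sorted training labels."""

    def fit(self, labels):
        self.classes_ = sorted(set(labels))  # fixed order, learned once from training data
        self._index = {label: i for i, label in enumerate(self.classes_)}
        return self

    def transform(self, labels):
        unseen = sorted(set(labels) - set(self._index))
        if unseen:  # refuse rather than silently renumbering
            raise ValueError(f"previously unseen labels: {unseen}")
        return [self._index[label] for label in labels]

    def inverse_transform(self, codes):
        return [self.classes_[c] for c in codes]


enc = SimpleLabelEncoder().fit(["ultramafic", "mafic", "intermediate", "sediment", "mafic"])
print(enc.classes_)                          # ['intermediate', 'mafic', 'sediment', 'ultramafic']
print(enc.transform(["mafic", "sediment"]))  # [1, 2]
```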
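The description of a random forest as many decision trees fitted on sub-samples of the data and aggregated into a final prediction can be made concrete with a deliberately tiny forest of one-split trees ("stumps"). This is a simplified illustration, not the algorithm used by `randomForest` or scikit-learn: each stump sees a bootstrap sample and a random subset of features, picks the single threshold split with the fewest misclassifications, and the forest predicts by majority vote. The toy data and all function names are hypothetical.

```python
import random
from collections import Counter


def fit_stump(X, y, features):
    """Best single split (feature, threshold, left_label, right_label) among `features`."""
    best = None
    for f in features:
        for threshold in sorted({row[f] for row in X}):
            left = [label for row, label in zip(X, y) if row[f] <= threshold]
            right = [label for row, label in zip(X, y) if row[f] > threshold]
            left_label = Counter(left).most_common(1)[0][0]  # majority class on each side
            right_label = Counter(right).most_common(1)[0][0] if right else left_label
            errors = sum(lab != left_label for lab in left) + sum(lab != right_label for lab in right)
            if best is None or errors < best[0]:
                best = (errors, f, threshold, left_label, right_label)
    return best[1:]


def fit_forest(X, y, n_trees=25, max_features=1, seed=0):
    """Fit n_trees stumps, each on a bootstrap sample and a random feature subset."""
    rng = random.Random(seed)
    n, n_features = len(X), len(X[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap: n rows drawn with replacement
        features = rng.sample(range(n_features), max_features)  # random feature subset
        forest.append(fit_stump([X[i] for i in idx], [y[i] for i in idx], features))
    return forest


def predict(forest, row):
    """Majority vote of the stumps."""
    votes = Counter(left if row[f] <= t else right for f, t, left, right in forest)
    return votes.most_common(1)[0][0]


# Toy data (hypothetical): both features separate class "A" from class "B".
X = [[1, 10], [2, 11], [3, 12], [4, 13], [10, 1], [11, 2], [12, 3], [13, 4]]
y = ["A", "A", "A", "A", "B", "B", "B", "B"]
forest = fit_forest(X, y)
print(predict(forest, [1, 13]), predict(forest, [13, 1]))  # A B
```

Real random forests grow deep trees and draw a fresh feature subset at every split; for a stump the two coincide because there is only one split. R's `randomForest`, for instance, tries `floor(sqrt(p))` variables per split by default for classification, which would be 5 for 28 elements.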
